Doomerism: Why Every Generation Thinks the End Is Near

Every generation seems convinced it is living at the edge of catastrophe. The details change, but the pattern does not. In the 1950s, it was nuclear annihilation. In the 1970s, experts warned of mass starvation and societal collapse from overpopulation. In the late 1990s, Y2K was expected to cripple global infrastructure. Today, the dominant fear is artificial intelligence — not just job disruption, but existential risk.

None of these fears were necessarily irrational. Most historical doom scenarios were rooted in real risks. Nuclear weapons could have destroyed civilization. Poorly designed software systems could have caused widespread infrastructure failure during Y2K. AI systems could create major societal disruption. But history shows that humanity usually adapts faster than our fears predict.

The challenge is learning the difference between legitimate caution and civilizational panic.

Why Societies Repeatedly Fall Into Doomer Thinking

Doomerism tends to emerge during periods of rapid change. Humans evolved in relatively stable environments, where the future looked somewhat like the past. Technological acceleration breaks that psychological model. When systems become too large, interconnected, or complex to intuitively understand, people lose confidence in their ability to predict outcomes.

That uncertainty creates fertile ground for catastrophic thinking.

There is also a strong media incentive structure behind doomerism. Fear captures attention better than stability. Predictions of collapse spread faster than predictions of gradual adaptation. A headline that says “AI May Eliminate Humanity” will always outperform one that says “AI Will Probably Create Regulatory and Labor Challenges Over Several Decades.”

Socially, doom narratives also create identity and meaning. Throughout history, people have wanted to believe they are living through uniquely important times. Apocalyptic thinking gives ordinary life a dramatic framework. It transforms uncertainty into a story with heroes, villains, and stakes.

And importantly, humans are terrible at forecasting nonlinear adaptation. We often assume technology changes while society remains static. In reality, institutions, regulations, markets, and cultural norms evolve alongside technological disruption.

That is why most doom predictions overestimate short-term collapse while underestimating long-term transformation.

The Fear Behind Y2K

By the late 1990s, Y2K had become one of the most widespread technological fears in modern history. I experienced this era firsthand while completing my MBA in the late '90s, where one of my final projects involved helping a company market its Y2K remediation services. At the time, the fear felt very real, not because people believed computers would suddenly become self-aware, but because modern society was already deeply dependent on fragile, interconnected software systems that few fully understood.

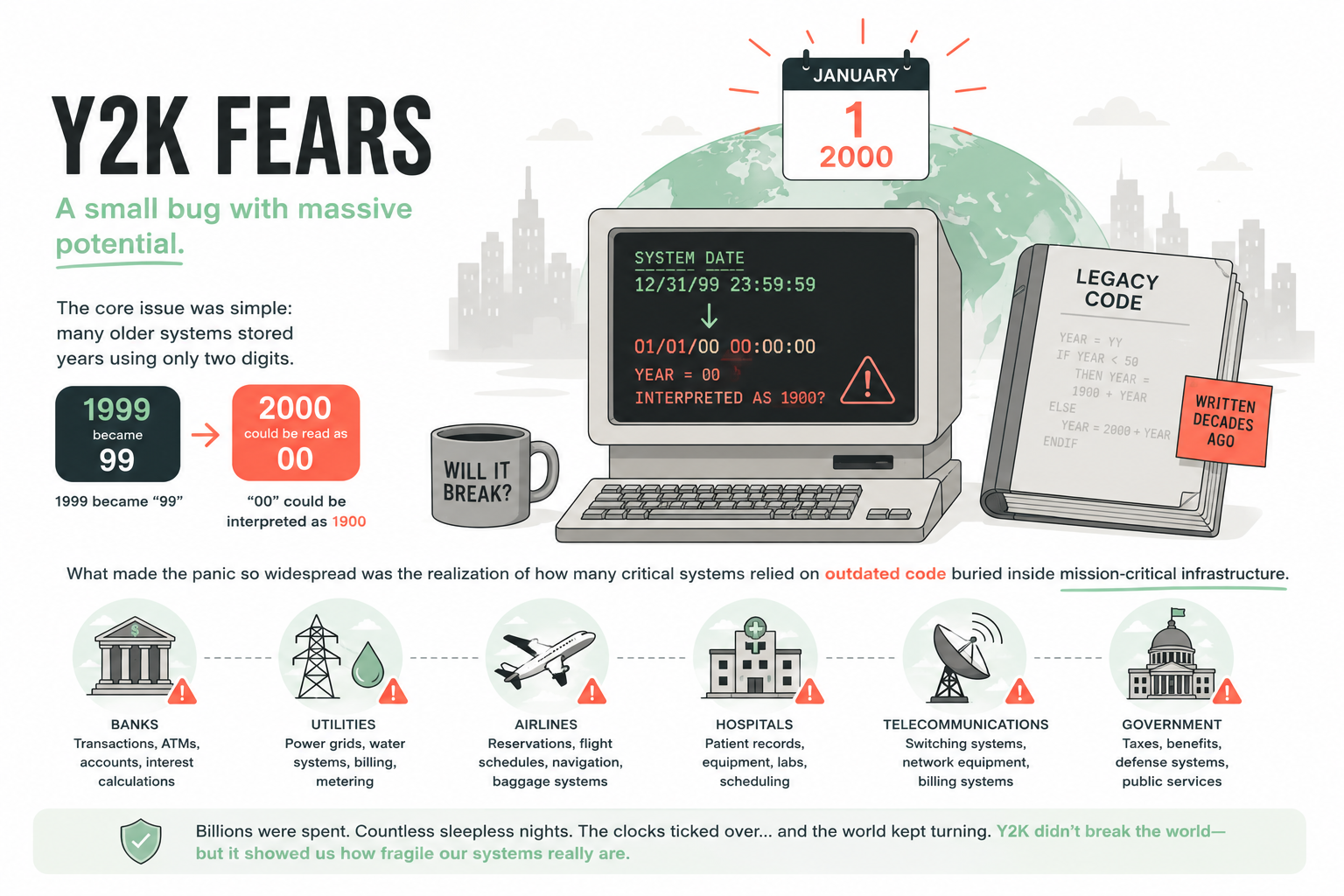

The core issue was simple: many older systems stored years using only two digits. “1999” became “99,” and there was concern that “00” would be interpreted as 1900 instead of 2000. But what made the panic so widespread was the realization of how many critical systems relied on outdated code buried inside banks, utilities, airlines, hospitals, telecommunications networks, and government infrastructure.
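To make the bug concrete, here is a minimal Python sketch of the two-digit year problem. The function names are hypothetical and the pivot value of 50 is an illustrative assumption, not taken from any particular system. The second function shows "windowing," one of the remediation techniques commonly used at the time, in which low two-digit values are read as 20xx and high ones as 19xx.

```python
def expand_naive(yy: int) -> int:
    """Legacy assumption: every two-digit year belongs to the 1900s."""
    return 1900 + yy


def expand_windowed(yy: int, pivot: int = 50) -> int:
    """Windowing fix: values below the pivot are read as 20xx, the rest as 19xx.

    The pivot of 50 is an arbitrary choice for illustration.
    """
    century = 2000 if yy < pivot else 1900
    return century + yy


def years_elapsed(start_yy: int, end_yy: int, expand) -> int:
    """Difference in years between two stored two-digit years."""
    return expand(end_yy) - expand(start_yy)


# An account opened in 1999 (stored as 99) and checked in 2000 (stored as 00).
print(years_elapsed(99, 0, expand_naive))     # -99
print(years_elapsed(99, 0, expand_windowed))  # 1
```

With the naive reading, one year of elapsed time becomes negative ninety-nine, which is the kind of result that could corrupt interest calculations, expiry checks, or scheduling logic. Windowing only postpones the ambiguity, which is one reason many organizations chose to expand stored years to four digits instead.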

What stood out during that period was how uncertainty itself fueled the fear. Nobody could confidently say which systems would fail, how failures might cascade, or whether society had enough time to fix everything. Consulting firms, software vendors, and remediation companies exploded overnight as organizations rushed to audit decades of technical debt hidden beneath their operations.

Experts warned about banking failures, power grid outages, grounded flights, broken supply chains, and corrupted financial records. Some people stockpiled food and generators. Others predicted temporary disruption. A smaller group believed civilization itself was dangerously vulnerable to technological collapse.

Then January 1st, 2000 arrived — and basically nothing happened.

But the interesting lesson is that Y2K was not entirely irrational hysteria. Massive global remediation efforts likely prevented many failures before they ever occurred. The fear was rooted in legitimate systemic risk. What proved inaccurate was the assumption that society would remain passive and unprepared in the face of that risk.

How Y2K Compares to AI Doom

The parallels between Y2K and AI fears are striking. Both emerged from technologies most people do not fully understand. Both involve systems deeply embedded into society. Both create fears of cascading consequences across industries and institutions. And both involve experts publicly disagreeing about the severity of the threat.

Today’s AI fears range from realistic concerns to science fiction-level speculation. Some predictions are grounded and practical. Others assume runaway scenarios where AI rapidly escapes human control and surpasses civilization itself. Like previous doom eras, the most extreme predictions dominate public attention because they are emotionally powerful.

But AI differs from Y2K in one very important way.

Why AI Doom Is Different

Y2K was fundamentally a technical bug. AI is a transformational capability. That distinction matters.

AI is not simply a single problem to fix before a deadline. It is a continuously evolving technology that affects economics, warfare, labor markets, education, creativity, and governance simultaneously.

The deeper concern around AI is not necessarily killer robots or Hollywood-style apocalypse. The more realistic concern is gradual systemic destabilization. And unlike Y2K, there may never be a single moment where society says, “The problem is solved.”

AI will likely become an ongoing governance challenge — more similar to the internet, nuclear energy, or financial systems than a one-time crisis.

That said, this does not automatically validate the most catastrophic predictions.

Historically, humans adapt remarkably well to disruptive technologies. The printing press destabilized religious authority. Industrialization disrupted labor markets. The internet transformed communication and commerce. Every major technological leap created fear alongside opportunity.

AI will almost certainly follow a similar pattern: disruption first, normalization later. The future is unlikely to look like either extreme utopia or total annihilation. It will probably look messy, uneven, regulated, commercialized, politicized, and deeply human.

Why AI Doom Will Probably Pass Like Previous Doomer Eras

One of the strongest arguments against extreme AI doom is that societies rarely remain passive in the face of emerging risk. When threats become visible, institutions react.

Governments regulate. Markets adapt. Consumers change behavior. Engineers build safeguards. Competing incentives create balancing forces. Public pressure reshapes norms.

None of this guarantees perfect outcomes. But history suggests humanity is far more adaptive than doom narratives assume. Doomerism often imagines technological change happening in isolation. In reality, civilization co-evolves with technology. Social systems bend around disruption, sometimes clumsily, but usually successfully.

That does not mean AI risk is imaginary. It means collapse is not inevitable.

What Society Should Actually Be Watching

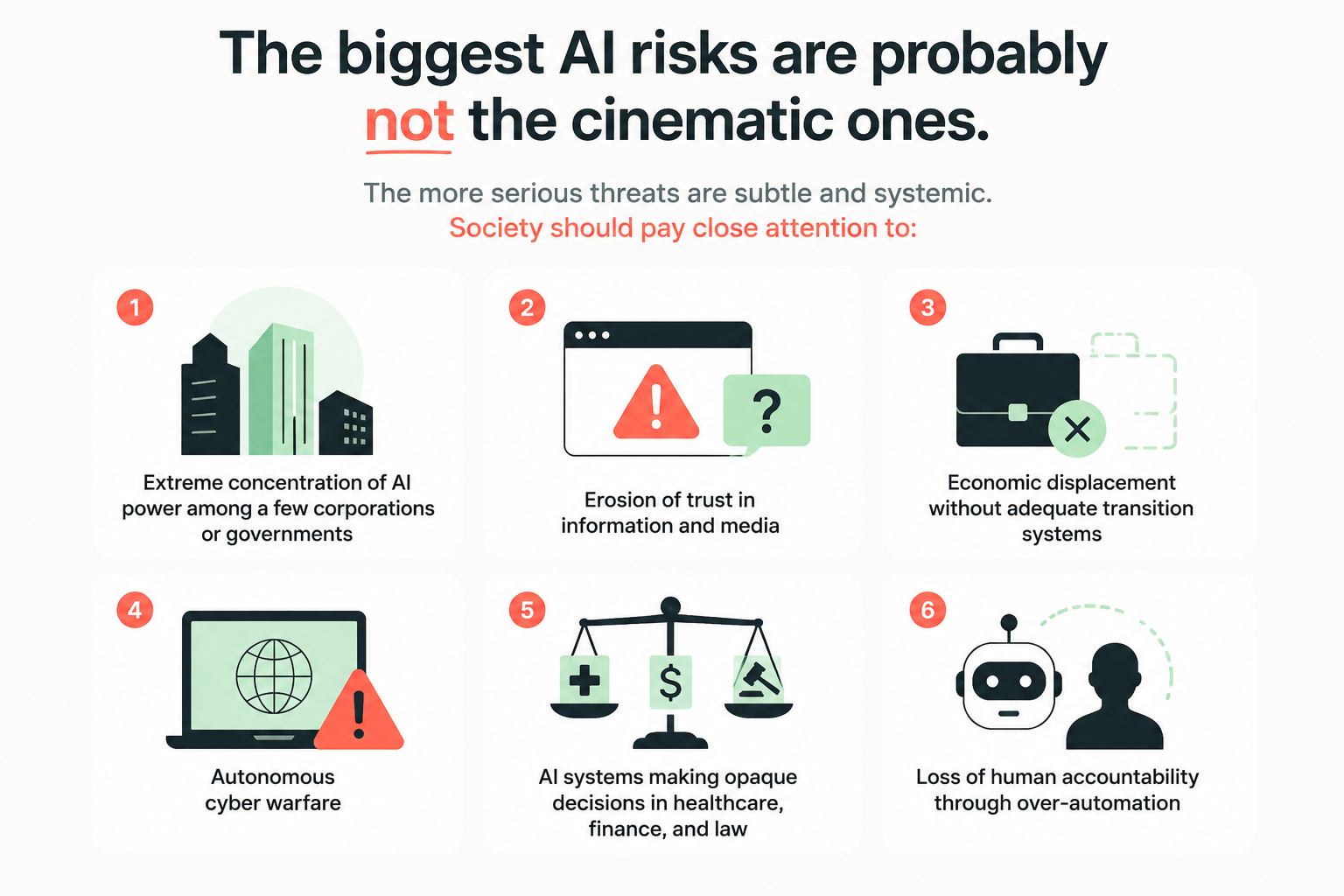

The biggest AI risks are probably not the cinematic ones. The more serious threats are subtle and systemic. Society should pay close attention to:

Extreme concentration of AI power among a few corporations or governments

Erosion of trust in information and media

Economic displacement without adequate transition systems

Autonomous cyber warfare

AI systems making opaque decisions in healthcare, finance, and law

Loss of human accountability through over-automation

Most importantly, society must avoid treating AI systems as inherently objective or wise. AI reflects incentives, training data, and human design choices. Misalignment often comes less from malicious AI and more from flawed human incentives embedded into systems at scale.

The real challenge is not surviving evil superintelligence tomorrow. It is building institutions capable of responsibly governing increasingly powerful technologies over decades.

That requires less panic and more competence. Less apocalyptic thinking and more thoughtful, long-term stewardship. History shows that humanity has repeatedly adapted to periods of technological fear and disruption, and I believe we can do the same with AI — especially if governments, technologists, businesses, and society work together to shape its development responsibly rather than react to it fearfully.